AI Companies' Safety Claims: A Critical Evaluation

The AI industry has made significant progress in recent years, with many companies developing powerful models that can perform complex tasks. However, as these models become more sophisticated, concerns have grown about their safety and potential misuse.

Current State of AI Safety Claims

A few months ago, AI companies claimed that their most powerful models did not have dangerous capabilities. However, recent reports suggest that some of these companies may have overstated the safety of their models or downplayed the associated risks.

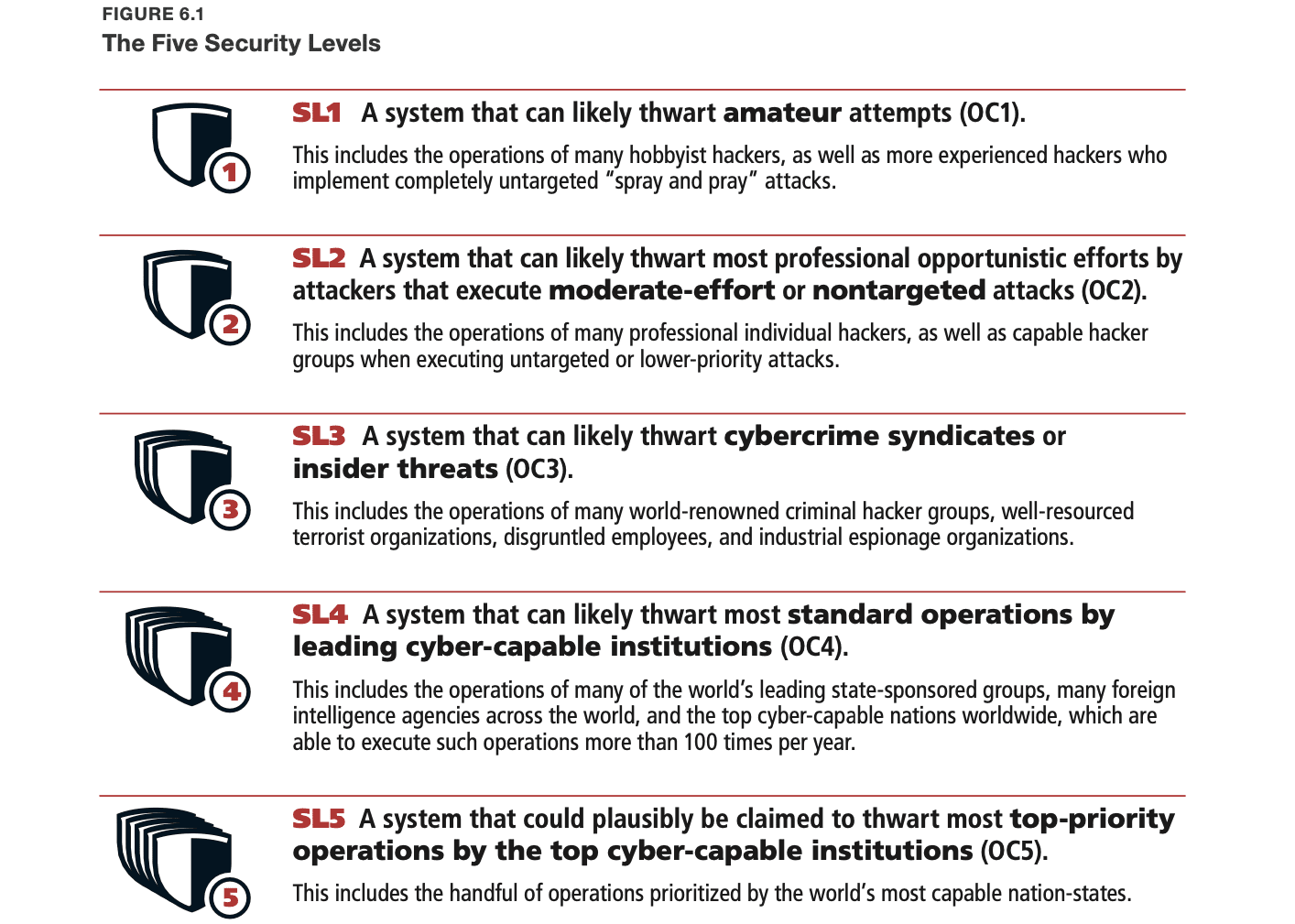

Anthropic, OpenAI, Google DeepMind, and xAI are four of the companies that have made such claims. They now acknowledge that their most powerful models might have dangerous capabilities, but they argue that their safeguards can prevent both misuse via the API and theft of the model weights.

However, many experts believe that these safeguards are not sufficient to prevent all types of misuse. For example, if a model's weights are stolen or leaked, API-level safeguards no longer apply, and the model could be used to create bioweapons or cause other harm to humans.

Evaluating the Safety Claims

To evaluate the safety claims made by these companies, we need to examine their security measures and safeguards. While some of them have published reports on their safety standards, many others lack transparency and clarity.

For example, xAI has not provided any information about its security measures or how it plans to prevent misuse via its API. Similarly, Google DeepMind's report on its safety standards is almost devoid of details.

The Risks Associated with AI Misuse

While the risks associated with AI misuse are still being researched and debated, many experts agree that they pose a significant threat to humanity. If an AI system becomes misaligned or causes harm to humans, the consequences could be devastating.

For example, if a model is used to create bioweapons or cause harm to humans, it could lead to widespread suffering and death. Similarly, if a model becomes misaligned while deployed internally, or is compromised through hacking, it could pose a significant risk to national security and global stability.

Conclusion

In conclusion, while AI companies have made some progress in developing powerful models that can perform complex tasks, many of their safety claims remain unproven or dubious. To mitigate the risks associated with AI misuse, these companies need to develop more robust security measures and safeguards.

Furthermore, it is essential for the industry as a whole to prioritize transparency and clarity when discussing AI safety standards. By doing so, we can ensure that the benefits of AI development are shared by all, while minimizing the risks associated with its misuse.

Recommendations

To improve the safety of AI models, we recommend that companies prioritize transparency and clarity when discussing their safety standards. We also recommend that they develop more robust security measures and safeguards to prevent both misuse via the API and theft of model weights.

Furthermore, we recommend that regulatory bodies take a proactive approach to ensuring the safety of AI systems. This could include developing clear guidelines and regulations for the development and deployment of AI models.

Resources

For more information on AI safety standards and security measures, please visit our resources page at aisafetyclaims.org.